A $385B liability vacuum: The agentic commerce questions financial institutions haven’t answered

Agentic commerce trends don’t leave much room for a wait-and-see posture.

One in five orders last Cyber Week was placed by AI agents, totaling roughly $70 billion in a single shopping period. Morgan Stanley projects $385 billion in U.S. digital commerce driven by agentic AI by 2030—up to 20% of all e-commerce, larger than the entire U.S. e-commerce market in 2011. 57% of executives expect agentic commerce to go mainstream within three years.

Agentic commerce involves autonomous AI agents that research, negotiate, and complete purchases on behalf of users, shifting the model from “search and click” to “ask and buy.” The technology isn’t waiting. The liability framework—think chargeback rules, fraud detection systems, dispute resolution processes—was built for a world where a human being made a deliberate decision at the moment of purchase. Agentic AI systems break that assumption at the foundation. And the financial institutions, merchants, acquirers, and issuers scrambling to figure out what replaces it are mostly watching each other.

That coordination gap is where the real exposure lives. It’s also where the most valuable agentic commerce research for financial institutions happens, and where panel-based data is essentially useless.

The assumption that breaks everything

Every fraud risk framework financial institutions rely on traces back to one premise: Intent was human, deliberate, and present at the moment of purchase.

Agentic AI systems invalidate all three simultaneously. The “intent” is encoded in API scopes set months earlier. The purchase executes automatically, long after the original authorization. The actor that pulled the trigger was software.

Some 53% of U.S. firms say they’d allow AI agents to negotiate directly with other AI agents. Nearly 40% of Americans have already made a purchase they wouldn’t have considered without an AI agent. The behavior is already here. The rules for what happens when it goes wrong are not.

Three liability questions are driving exposure across the financial sector, and none have clean answers yet.

Who eats the loss?

When an AI-initiated transaction goes sideways, existing fraud risk frameworks don’t map cleanly. Is it card-present payment fraud? Card-not-present payment fraud? An authorized-but-disputed transaction? The answer determines who bears the loss. But no one has agreed on which category applies to an agent-initiated purchase.

Issuers are watching merchants for chargeback positions. Merchants are watching networks for authorization guidance. Acquirers are waiting for fraud prevention standards to emerge. The agents are executing transactions. The contracts assigning responsibility remain unsigned. Visa processed over 106 million disputes globally in 2025, up 35% since 2019. Layering agentic commerce on top of that without resolving liability turns a scaling challenge into a structural one.

The most revealing agentic commerce research for financial institutions doesn’t come from measuring adoption rates. It comes from asking each stakeholder group what they expect of the others. When merchants hold off on agent authentication until issuers publish chargeback guidance, and issuers hold off on new authorization frameworks until merchants implement bot detection, you’ve identified a coordination failure. That’s where friction, loss, and stalled investment concentrate first. It’s also exactly what a panel of respondents who self-report as financial professionals won’t surface.

The fraud threat nobody has solved

The emerging threats in agentic commerce aren’t hypothetical. Payment fraud prevention in this environment requires capabilities the banking industry largely hasn’t built yet.

AI agents can open accounts, move money, and impersonate legitimate cardholders continuously, at machine speed, and at near-zero marginal cost. Synthetic identity fraud, already one of the fastest-growing fraud risk categories, becomes significantly harder to detect when the actor is an agentic AI system rather than a human gaming a screener.

The numbers reflect the stakes. The FBI reported $16 billion in internet-enabled crime losses in 2024, up 33% year-over-year. Deloitte estimates generative AI could drive $40 billion in annual bank and consumer losses by 2027.

Traditional fraud detection methods are insufficient for managing the rapid decision-making of AI agents. Today’s infrastructure of KYC files, SARs, and case-based investigation wasn’t built to flag suspicious transactions initiated by a legitimate-looking agentic AI system operating at machine speed across multiple financial institutions. Adaptive fraud detection requires verified behavioral data from actual agentic commerce environments, much like advanced marketing teams are beginning to rely on AI-driven synthetic customer simulations to test and refine go-to-market strategies. Advanced AI models can reduce false positives, and with them the unnecessary transaction declines that hurt customer experience, but only when trained on verified signals from real agentic AI systems, not proxy populations.

The consent problem and regulatory requirements

Classic card payments make consent synchronous. You tap, you pay, you’re accountable for a decision made with full awareness.

Agentic commerce makes consent asynchronous, layered, and delegated. You authorize your AI once under terms buried in a permissions screen, and it negotiates with other AI agents on your behalf for months. Whether you consented to a specific non-refundable booking, or a procurement order outside normal parameters, is genuinely unresolved.

Regulatory requirements haven’t caught up. But regulators have signaled that procedural compliance isn’t sufficient when outcomes look unfair. Policy shifts around platforms like Zelle show courts increasingly holding financial institutions accountable for payment fraud outcomes even when standard benchmarks were met. Agentic commerce will stress-test that at a scale those cases never approached.

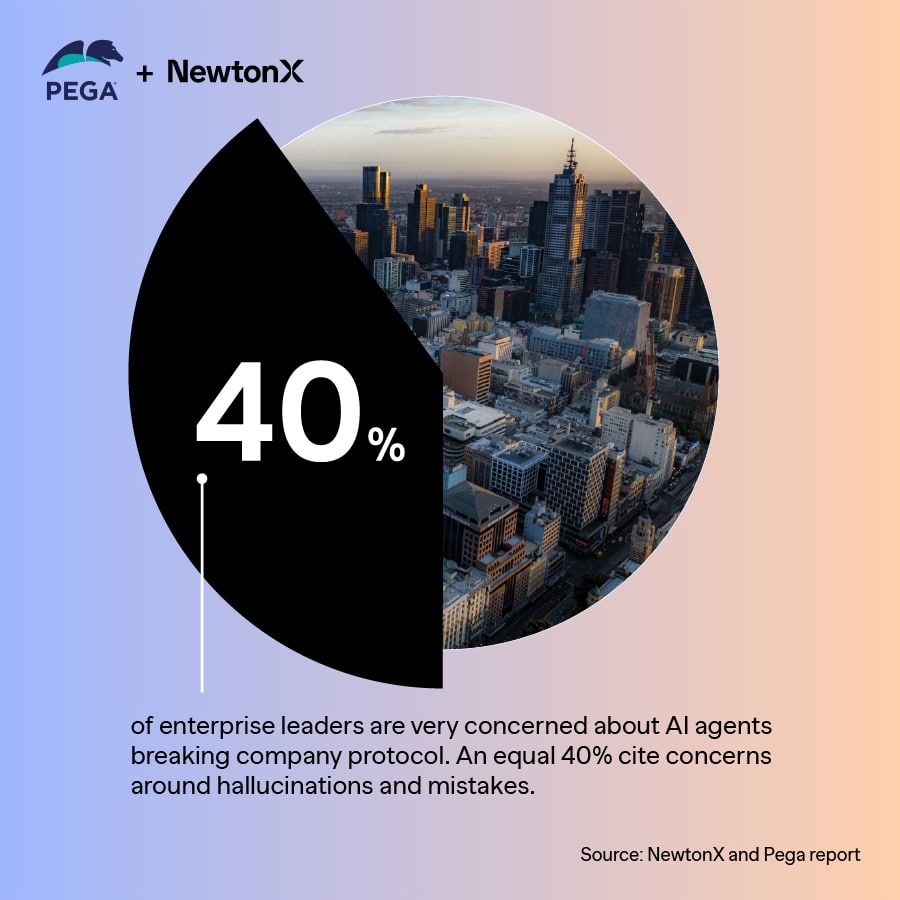

The ethical considerations run deeper than compliance. As AI agents assume more autonomous roles in financial services, governance frameworks must evolve to prevent bias, hallucinations, and regulatory violations. Effective governance frameworks require structured decision-making trails, robust data curation, and human-in-the-loop oversight, giving financial professionals the ability to interrogate AI-generated outputs and override decisions when necessary.

Reducing risk: Four moves forward-leaning institutions are making

Most organizations in the financial sector are in wait-and-see mode. The ones reducing risk are treating the liability gap as a design problem, and they are making four moves:

- Map your exposure before regulators do. Inventory every AI-mediated touchpoint in your customer journeys. For each, document who currently holds liability under existing contracts. Run misfire scenarios (wrong merchant, duplicate order, synthetic identity fraud attempt) and explicitly assign who absorbs the loss in each.

- Build agent authentication as a first-class fraud prevention control. Real-time AI monitoring of agent behavior for anomalies like suspicious transactions, out-of-scope purchases, and unusual negotiation patterns is the payment fraud prevention infrastructure this market requires. Think KYC for agents, layered on top of KYC for humans.

- Redesign consent for an asynchronous world. “Can book domestic travel up to $2,000/month on this card” is a real authorization. Generic “travel permissions” buried in a terms document is not. Time-bound scopes and plain-language permissions give users confidence they’re delegating safely. Agentic AI systems enhance customer experiences in financial services through real-time decision-making, but only when the consent architecture earns user trust.

- Evolve your governance frameworks before you need them. Financial institutions that build auditability, human oversight, and structured decision-making trails now will define the category. The ones waiting will inherit whatever standard the early movers establish.
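
To make the consent and agent-monitoring moves above more concrete, here is a minimal sketch in Python of how a scoped, time-bound authorization such as “can book domestic travel up to $2,000/month on this card” might be represented and checked before an agent's purchase is approved. Everything in it is a hypothetical illustration: `ConsentScope`, `AgentPurchase`, `check_purchase`, the category labels, and the dates are assumptions made for this example, not part of any payment network's rules or any existing agent protocol.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class ConsentScope:
    """Hypothetical plain-language delegation a cardholder grants to an agent."""
    category: str            # what the agent may buy, e.g. "domestic_travel"
    monthly_limit: Decimal   # spending cap per calendar month
    valid_from: date         # first day the scope is active
    valid_until: date        # last day the scope is active (time-bound)


@dataclass(frozen=True)
class AgentPurchase:
    """Hypothetical record of a purchase an agent is about to make."""
    category: str
    amount: Decimal
    on: date


def check_purchase(scope, purchase, spent_this_month):
    """Return (allowed, reason) so every decision leaves an auditable trail."""
    if not (scope.valid_from <= purchase.on <= scope.valid_until):
        return False, "scope expired or not yet active"
    if purchase.category != scope.category:
        return False, "category outside delegated scope"
    if spent_this_month + purchase.amount > scope.monthly_limit:
        return False, "monthly limit exceeded"
    return True, "within scope"


# Illustrative values only (assumed for this example).
scope = ConsentScope(
    category="domestic_travel",
    monthly_limit=Decimal("2000"),
    valid_from=date(2026, 1, 1),
    valid_until=date(2026, 6, 30),
)
flight = AgentPurchase("domestic_travel", Decimal("450"), date(2026, 3, 10))

# The agent has already spent $1,700 this month, so $450 more breaches the cap.
print(check_purchase(scope, flight, Decimal("1700")))
```

Returning a reason alongside the decision is a deliberate choice in this sketch: an out-of-scope attempt becomes both a declined purchase and a logged signal that a monitoring or dispute process could later inspect.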

The research advantage in an unwritten market

The competitive advantage in agentic commerce isn’t going to the financial institutions with the most data. It’s going to the ones talking to the right financial professionals while everyone else reads the same trade coverage.

Payment network executives building trusted agent protocols. Risk leaders at top-10 issuers reworking fraud detection playbooks. Corporate treasury heads deciding where their first agentic payment bets land. These are small, senior, hard-to-reach populations—ones who don’t show up in general panels, and whose positions will determine how regulatory requirements and liability frameworks actually get written.

Agentic commerce research for financial institutions at this level requires verified access to those conversations, similar to how targeted executive surveys on generative AI adoption can cut through hype and reveal real-world use cases. The gap between what those insiders are planning and what the market assumes they’re planning is where financial services strategy gets made.

When hundreds of billions in AI-driven digital commerce start flowing through unresolved liability structures, the financial institutions that understood the gaps before they became losses will be the ones setting the terms. Everyone else will be on hold, waiting for someone to pick up.
